Please write docs with a theory of mind

I know this story is going to be a familiar one. I had to do some work and learned that an engineering team had built a tool/system that did the majority of what I needed. Said tool was developed for that team's own purposes, but it was a similar enough use case that a lot of the infrastructure and technical hurdles were already solved. I just had to read the onboarding docs (which the team had helpfully sent me!), figure out how to re-cast my existing data and use cases to fit their infrastructure, and run it! Simple!

I'm sure you know exactly how reality played out.

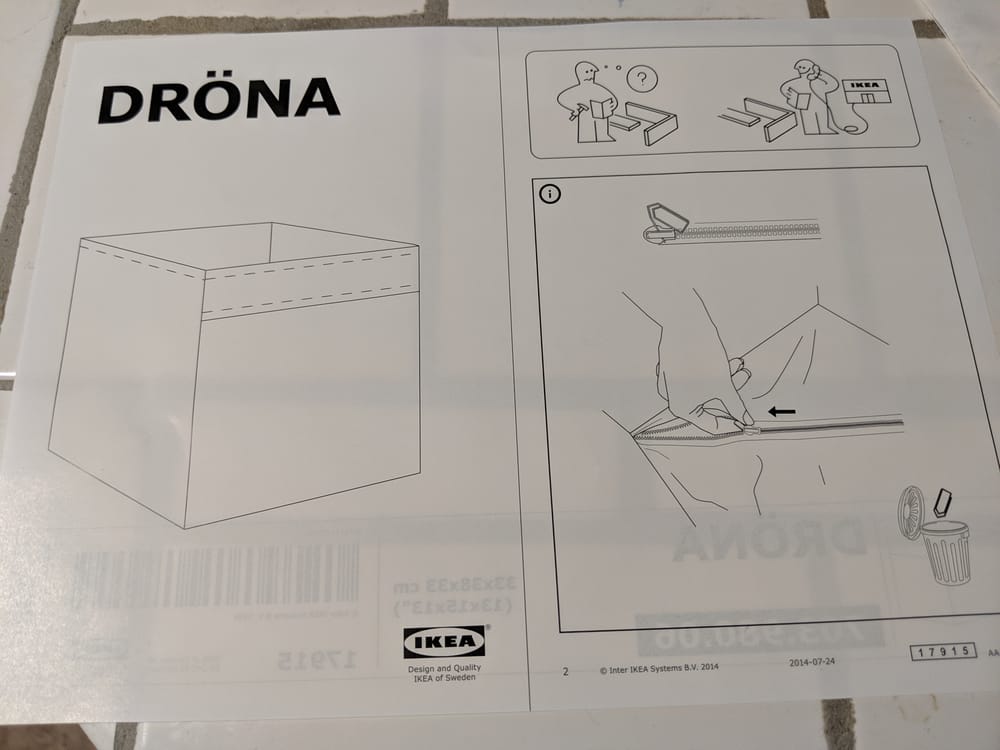

As you can imagine, things ... did not work as expected. The onboarding docs did exist. There were even a few videos recorded by someone showing off features: how to click various buttons and browse various data views. The only problem was that they made about a hundred assumptions about the environment a user might be in. Did the environment have the correct software and components installed? Did it have the correct data tables in place? Were a bunch of completely undocumented backend services already set up? What version of everything was being run? If any of these little details, or dozens more, were out of place, then the documentation made zero sense because the buttons being described didn't exist in the UI. Same goes for all the videos showing off features. In a way, until I managed to grab an engineer and have them walk me through how to access the system, the docs might as well have been describing how to construct the pyramids.

At the end of the day, this was pure documentation failure. Now, I know how difficult it is to have good documentation, especially for complex systems with lots of different moving components that are all being updated. Such complex systems usually mean that not even LLMs have all the context needed to write actually good documentation without help. Much as I know that engineers hate writing docs and would love nothing more than to offload that work to a machine, it's extremely difficult to do because production software is an interface that places a facade over any number of systems, abstractions, and layers of logic. So far, I haven't really seen any system that can look at the whole picture holistically and explain to a user how to do certain things.

But even if such systems had all the code context sorted out, and even the UI pre-rendered in a way that a magical model can "see" where everything is and know exactly which buttons to click, the documentation problem still has one last hurdle – LLMs don't have an actual theory of mind of the reader.

When I write a newsletter post, or when my colleagues write the official documentation for a GCP product, we all have a theory of mind for the target audience. What kind of background do they have? What terms are they going to be familiar with and what will we have to explain? Why are they here reading the docs? What would they be trying to accomplish? Having those people in mind has a huge effect on what you explain within the documentation.

And it's not just about imagining what people would not know. An LLM can spin lots of moonbeams pretending to be writing for this persona or some other one. The problem is that whatever persona you describe for the LLM to take on, it's still completely unmoored from actual experience. The real work is in actually learning about the audience over time, getting a sense of what they know, and then anticipating their needs beforehand. Then you go and ideally test the documentation with that audience to see just how far off the mark you were. If it sounds like a lot of work, it is!

But over the years, I've internalized various patterns of documentation that are useful to have when handing data work off to someone else. I figured today's as good a time as any to write them down.

For the complete stranger

This is sorta my default mode because it's the most thorough and gets the most people to where they need to be.

Often I need to hand work off to someone who I have to assume has no idea what is going on. They might actually be a data practitioner, but at the very least they're going to be absolutely clueless as to what the blob of code I'm handing to them does, what's in the data, or why any of this exists in the first place. Y'know, the important little metadata bits that surround a project.

In these situations, my first priority is to transfer a mental model of the whole project to the reader. I'm doing my best to paint the situation in their mind so that they can start anticipating where things are and why certain decisions were made. With a good enough mental map of where data comes from, how the code steps through an analysis, and how results are made, it becomes much easier to go into the specific code itself and understand the comments there. That way, I don't have to repeat myself or keep updating explanations of what's going on in the code, because the overall system design is more durable.

For the code side, I imagine what it's like for someone who comes with a brand new, empty computer. What would they need to know to get to a working state? If they need access to certain systems, where should they get it? Can someone following instructions blindly get to a working state?
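One way to make the "brand new, empty computer" test concrete is to ship a small preflight check alongside the docs. The sketch below is only an illustration: the required modules, the `git` command, and the `PROJECT_DATA_DIR` variable are hypothetical placeholders for whatever a real project actually assumes.

```python
import importlib.util
import os
import shutil
import sys

# Hypothetical requirements for an imaginary project; replace with your own.
REQUIRED_PYTHON = (3, 9)
REQUIRED_MODULES = ["json", "sqlite3"]  # top-level module names only
REQUIRED_COMMANDS = ["git"]
REQUIRED_ENV_VARS = ["PROJECT_DATA_DIR"]


def check_environment(modules=REQUIRED_MODULES, commands=REQUIRED_COMMANDS,
                      env_vars=REQUIRED_ENV_VARS, min_python=REQUIRED_PYTHON):
    """Return a list of human-readable problems; an empty list means ready."""
    problems = []
    if sys.version_info < min_python:
        problems.append(f"Python {min_python[0]}.{min_python[1]}+ is required")
    for name in modules:
        # find_spec returns None when a top-level module cannot be found.
        if importlib.util.find_spec(name) is None:
            problems.append(f"Missing Python module: {name}")
    for cmd in commands:
        # shutil.which returns None when the command is not on PATH.
        if shutil.which(cmd) is None:
            problems.append(f"Missing command-line tool: {cmd}")
    for var in env_vars:
        if not os.environ.get(var):
            problems.append(f"Environment variable not set: {var}")
    return problems


if __name__ == "__main__":
    for problem in check_environment():
        print("NOT READY:", problem)
```

A check like this doesn't replace the written instructions, but it turns the hidden assumptions into a list someone can read, and it fails loudly when one of them doesn't hold.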

For the data side, I have to write up a data dictionary of sorts. Where did the data come from? What quirks should they know about? What important caveats do they need to know so they understand what claims they can and cannot make? While in theory they might be able to figure it out by reading the code in detail, we all know that doesn't normally happen unless something has gone horribly wrong.
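A data dictionary doesn't need special tooling. As a minimal sketch (the field names, sources, quirks, and caveats below are all made up for illustration), it can be a small structure that records source, quirks, and caveats per field, plus a check for columns nobody has documented yet:

```python
from dataclasses import dataclass, field


@dataclass
class FieldDoc:
    name: str
    description: str
    source: str
    quirks: list = field(default_factory=list)
    caveats: list = field(default_factory=list)


# Hypothetical entries; a real dictionary would describe your own columns.
DATA_DICTIONARY = [
    FieldDoc("user_id", "Internal account identifier", "accounts table"),
    FieldDoc(
        "signup_date",
        "Date the account was created",
        "accounts table",
        quirks=["Stored in UTC, not the user's local time"],
        caveats=["Do not use for billing-cycle claims"],
    ),
]


def render(fields):
    """Render field docs as plain text for a handoff doc."""
    lines = []
    for f in fields:
        lines.append(f"{f.name}: {f.description}")
        lines.append(f"  source: {f.source}")
        for quirk in f.quirks:
            lines.append(f"  quirk: {quirk}")
        for caveat in f.caveats:
            lines.append(f"  caveat: {caveat}")
    return "\n".join(lines)


def undocumented(columns, fields):
    """Return columns present in the data but missing from the dictionary."""
    documented = {f.name for f in fields}
    return [c for c in columns if c not in documented]


print(render(DATA_DICTIONARY))
print(undocumented(["user_id", "signup_date", "plan"], DATA_DICTIONARY))
```

The `undocumented` check is the useful part for a stranger: it makes a gap in the documentation visible instead of leaving them to guess what an unexplained column means.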

For the teammate that's borrowing code

Going to the complete opposite extreme, I sometimes have to transfer code to a teammate. I can make lots more assumptions about their environment, familiarity with terms, and systems access. That lets me take a bunch of shortcuts.

Now, instead of having to transfer the mental model of the entire project, I can cut right to the chase and just transfer the mental model of the system architecture. Code goes here, it's invoked in this way, here's the high level algorithms it uses and what systems it needs access to. Data lives there, it comes from all these places, the most important definitions are in this place. Important caveats are listed somewhere too because we're data folks and we live on caveats.

My expectation when sending this sort of code out is that my colleague wants to do one of two things. Either they want to re-run my code in full, so I need to document how to invoke the code and how the inputs/outputs work, or they want to yank some bits of my code for their own purposes, which my architecture explanation should help them find without too much struggle.

For researchers

I've a special mode for when I need to prepare things for someone who I know is doing research. My assumption is that they're going to take the data and code and do whatever it is they need, and it's likely got little to do with whatever analysis code I've already written. So the needs shift to what I expect their needs to be: data provenance and advice for data cleaning.

I'll take extra time to explain what all the data fields mean, how they're collected, any known bugs or issues that should be addressed. I also tend to be extra detailed in describing exactly what is going on during the data collection because it can often make a difference in how the data is interpreted. I'll also try to warn about transient issues like "during this week we had an outage in one region!".
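Transient issues are easier to respect when they travel with the data rather than living in someone's memory. As a rough sketch, assuming a hand-maintained incident log (the dates, region names, and notes here are invented), a researcher could look up which known issues overlap a given record:

```python
from datetime import date

# Hypothetical incident log; real entries would come from your own records.
KNOWN_INCIDENTS = [
    {
        "start": date(2024, 3, 4),
        "end": date(2024, 3, 10),
        "region": "eu-west",
        "note": "regional outage, events under-reported",
    },
    {
        "start": date(2024, 5, 1),
        "end": date(2024, 5, 1),
        "region": "all",
        "note": "logging bug, duplicate events possible",
    },
]


def incident_notes(record_date, region, incidents=KNOWN_INCIDENTS):
    """Return notes for every known incident covering this date and region."""
    return [
        incident["note"]
        for incident in incidents
        if incident["start"] <= record_date <= incident["end"]
        and incident["region"] in (region, "all")
    ]


print(incident_notes(date(2024, 3, 5), "eu-west"))
print(incident_notes(date(2024, 3, 5), "us-east"))
```

Whether a researcher drops, flags, or models around those rows is their call; the point is only that the warning is attached to the data they are looking at.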

I'll also put more energy into documenting the data cleaning processes since very often there are lots of hidden gotchas that lurk in the raw data and are smoothed over in post-processing. While it's always good practice to write data cleaning decisions down, industry tends to make some leaps with assumptions that may not be fully justifiable in a more rigorous setting.

For students

Meanwhile, if I have to teach people something, things change yet again. This time, I'm massively stripping away distractions. It's hard enough to learn something without constantly tripping over side details. Sometimes, it means I have to make specialized code and data samples to skip over things I don't want to spend time on.

Data cleaning steps are the most common thing I'll gloss over, usually by including the code and generating an intermediate clean data set. The cleaning steps exist for reference, but then I can just skip over them and focus my attention on whatever topic I'm actually trying to teach. We all know that any discussion of data cleaning can potentially derail things for whole days – it's probably why so few classes bother teaching it.
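As a small illustration of that pattern, the cleaning can live in its own script that writes an intermediate file, so the lesson starts from the clean version. The column names (`student`, `score`) and the cleaning rules below are hypothetical stand-ins for whatever a real class dataset needs:

```python
import csv


def clean_rows(raw_rows):
    """Strip whitespace, drop rows with no score, and cast scores to float."""
    cleaned = []
    for row in raw_rows:
        score = (row.get("score") or "").strip()
        if not score:
            continue  # skipped here; the reasons stay documented in this file
        cleaned.append({"student": row["student"].strip(), "score": float(score)})
    return cleaned


def write_clean_csv(raw_path, clean_path):
    """Read the raw CSV, clean it, and write the intermediate dataset."""
    with open(raw_path, newline="") as src:
        cleaned = clean_rows(csv.DictReader(src))
    with open(clean_path, "w", newline="") as dst:
        writer = csv.DictWriter(dst, fieldnames=["student", "score"])
        writer.writeheader()
        writer.writerows(cleaned)
    return len(cleaned)
```

Students who are curious can still open the script, but nobody has to read it to follow the class.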

For stakeholders who haven't earned my trust in data handling

We've all had stakeholders who request data to work with on their own. Sometimes those stakeholders know what they're doing and can be trusted to do thoughtful analyses. Other stakeholders can barely divide two numbers together in Excel and can barely be trusted with even that.

Trusted folk get nice, cleaned up datasets with explanations on what they're getting. Depending on their skill level, I'll make sure they understand the limitations of what the data can say so that they make the best use of it. But since I trust them to use the data (or come to me with questions), they can get some pretty fine-grained stuff. There are some product managers who I wholeheartedly would trust with a largely raw, row-level dataset because they've shown that they can use pivot tables and sound reasoning to arrive at solid conclusions.

The people who aren't trusted get a much more tightly controlled dataset, where I do my very best to only give data that can't be abused too much. They'll get explanations for what things are and enough room to do the various analyses they want, but they're most definitely not going to get everything. I'll often also do the cleaning for them, with included documentation, because that's the biggest source of potential error and unintentional misuse.
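One possible way to build such a controlled dataset, offered here as an assumption rather than a description of any specific workflow, is to hand over pre-aggregated summaries instead of rows and to leave out groups too small to interpret. The field names and the threshold are hypothetical:

```python
from collections import defaultdict


def summarize(rows, group_key, value_key, min_group_size=5):
    """Aggregate row-level data into per-group counts and means.

    Groups with fewer than min_group_size rows are omitted entirely,
    since tiny groups invite over-interpretation.
    """
    groups = defaultdict(list)
    for row in rows:
        groups[row[group_key]].append(row[value_key])

    summary = []
    for key in sorted(groups):
        values = groups[key]
        if len(values) < min_group_size:
            continue
        summary.append({
            group_key: key,
            "n": len(values),
            "mean": sum(values) / len(values),
        })
    return summary


# Hypothetical row-level data that stays with the analyst.
rows = [
    {"plan": "free", "sessions": 1},
    {"plan": "free", "sessions": 2},
    {"plan": "free", "sessions": 3},
    {"plan": "pro", "sessions": 10},
]
print(summarize(rows, "plan", "sessions", min_group_size=2))
```

The stakeholder still has room to compare groups, but the shape of the data rules out the most common misreadings of individual rows.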

It's all grounded in people

There are plenty of other people you can come in contact with who need different types of documentation. Finance folks typically need help translating their conventions and needs to whatever data engineering has put together. Even though money fields and dates are everywhere in data, there are extremely particular needs around those fields in a finance context. It's just like how it matters a lot to us when a paycheck is deposited versus when the funds actually clear and are ours to use.

Overall, all these habits I've built over the years come from working with various actual people and realizing that they need different levels of documentation. Failing to anticipate those needs usually results in extended conversations to figure out why they can't seem to get what they need from me. Since I don't like having to revisit work due to miscommunication, I spend a lot of brain cycles trying to get ahead of them.

And so when I get put on the other side of the table and receive docs that make absolutely no sense to me... I get really, really, disappointed.

About this newsletter

I’m Randy Au, Quantitative UX researcher, former data analyst, and general-purpose data and tech nerd. Counting Stuff is a weekly newsletter about the less-than-sexy aspects of data science, UX research and tech. With some excursions into other fun topics.

All photos/drawings used are taken/created by Randy unless otherwise credited.

- randyau.com — homepage, contact info, etc.
